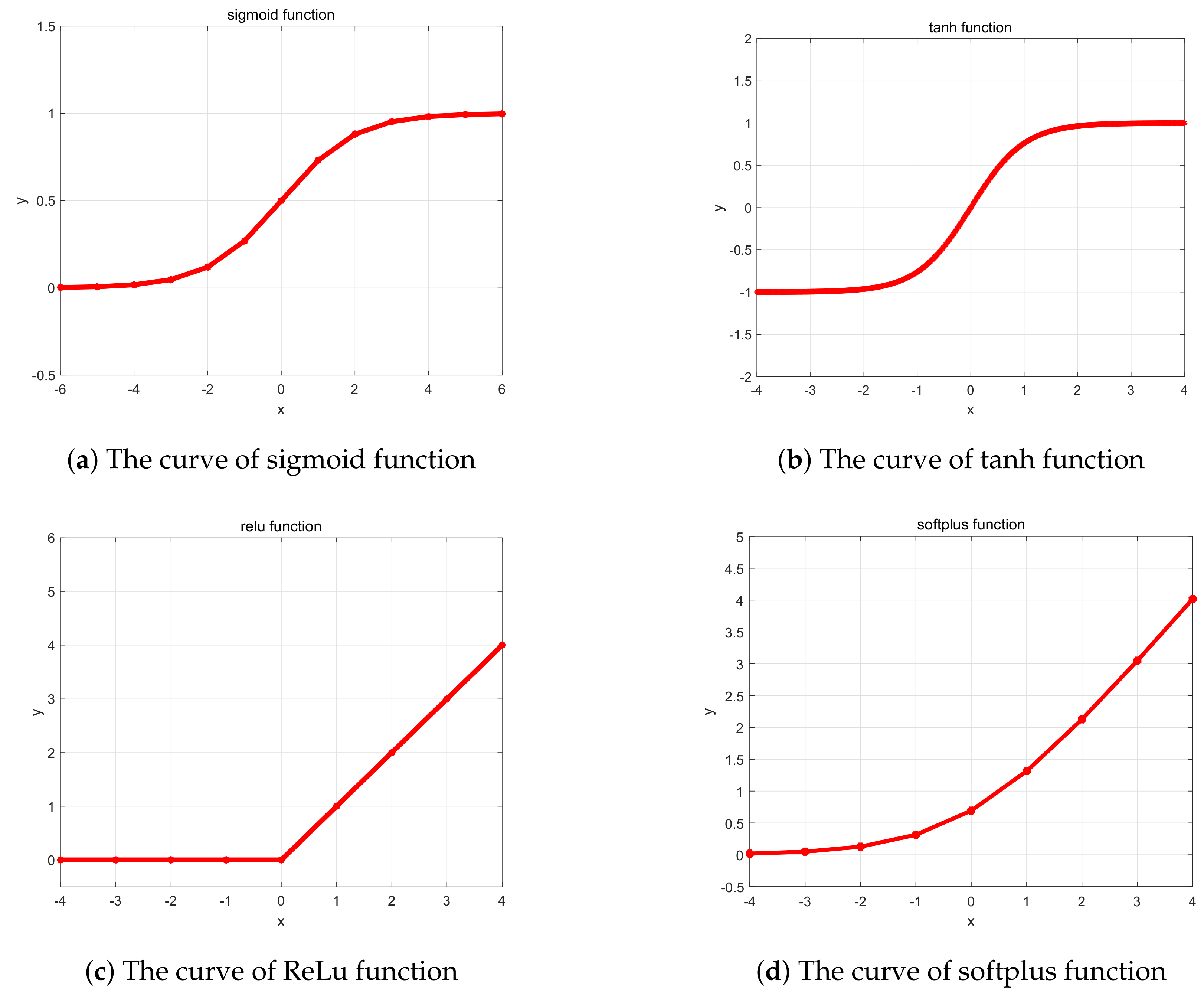

The nonlinearities that allow neural networks to capture complex patterns in data are referred to as activation functions. They let deep neural networks model complex interactions between features of the data. Over the course of the development of neural networks, several nonlinear activation functions have been introduced to make gradient-based deep learning tractable.

If you are looking to answer the question, ‘which activation function should I use for my neural network model?’, you should probably go with ReLU. The exception is a gating mechanism, as in LSTM or GRU cells, where you should opt for sigmoid and/or tanh in those cells. However, if you have a working model architecture and you’re trying to improve its performance by swapping out activation functions or treating the activation function as a hyperparameter, then you may want to try hand-designed activations like SELU or a function discovered by reinforcement learning and exhaustive search like Swish. This guide describes these activation functions and others implemented in MXNet in detail.

ReLU is commonly used in many neural network architectures because of its simplicity and performance. Both ReLU and Swish are continuous, but Swish is also smooth, whereas ReLU has a sharp change in direction at x = 0. The output of the Swish function approaches a constant value (zero) as the input approaches negative infinity, but increases without bound as the input approaches infinity.
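To make the comparison between ReLU and Swish concrete, here is a minimal sketch in plain Python. It assumes the common form of Swish, x · sigmoid(βx) with β = 1 by default; the function names and the `beta` parameter are illustrative choices, not an API taken from MXNet or any other library.

```python
import math


def relu(x: float) -> float:
    """Standard ReLU: max(x, 0)."""
    return max(x, 0.0)


def sigmoid(x: float) -> float:
    """Logistic sigmoid, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def swish(x: float, beta: float = 1.0) -> float:
    """Swish in its common form x * sigmoid(beta * x); beta=1 is an assumed default."""
    return x * sigmoid(beta * x)


if __name__ == "__main__":
    # Compare both functions on a few inputs, including large magnitudes.
    for x in (-20.0, -2.0, -0.5, 0.0, 0.5, 2.0, 20.0):
        print(f"x={x:6.1f}  relu={relu(x):10.6f}  swish={swish(x):10.6f}")
```

For large negative inputs the Swish value shrinks toward zero, for large positive inputs it tracks the input itself, and for small negative inputs it dips slightly below zero, which is where it differs most from ReLU.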
Deep neural networks are a way to express a nonlinear function with lots of parameters from input data to outputs.

The rectified linear unit (ReLU) activation function, with default values, returns the standard ReLU activation: max(x, 0), the element-wise maximum of 0 and the input tensor. Modifying the default parameters allows you to use non-zero thresholds, change the max value of the activation, and use a non-zero multiple of the input for values below the threshold. This simple gatekeeping function has become arguably the most popular of activation functions, mostly because the max function is so fast to run. There is one glaring issue with the ReLU function.

In this experiment, we used the SGD optimizer for all models and trained longer to see if Swish could make a comeback. Unfortunately, ReLU beat Swish on both validation and training data. I tried several configurations, e.g., with and without batch norm, and ReLU always outperformed Swish in terms of validation accuracy. However, Swish usually had lower training accuracy/loss.

It should be mentioned that I used only shallow networks in toy experiments, which are not representative. By contrast, popular CNNs like ResNet, DenseNet and MobileNet perform better when using Swish, according to the paper. Overall, I think Swish is still a good choice, considering that activation function selection is task dependent. For example, we can use Swish and ReLU to train different models to increase the variety for ensembling. A useful next step is to read through the paper and check in which cases Swish performed better.

See also: Experiments with SWISH activation function on MNIST dataset (Medium).
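The configurable ReLU described earlier (a non-zero threshold, a cap on the output, and a multiple of the input below the threshold) can be sketched in plain Python as follows. The function name `generalized_relu` and the parameter names are hypothetical, and the choice to scale `x - threshold` rather than `x` below the threshold is an assumption made for this sketch, so check your library's documentation for its exact definition.

```python
from typing import List, Optional, Sequence


def generalized_relu(
    xs: Sequence[float],
    negative_slope: float = 0.0,        # multiplier applied below the threshold
    max_value: Optional[float] = None,  # optional cap on the output
    threshold: float = 0.0,             # inputs below this are scaled by negative_slope
) -> List[float]:
    """Element-wise ReLU with optional threshold, cap, and leaky slope.

    With the defaults this reduces to the standard max(x, 0).
    """
    out = []
    for x in xs:
        if max_value is not None and x >= max_value:
            y = max_value                         # saturate at the cap
        elif x >= threshold:
            y = x                                 # pass the input through unchanged
        else:
            y = negative_slope * (x - threshold)  # assumed form below the threshold
        out.append(float(y))
    return out


if __name__ == "__main__":
    data = [-2.0, 0.5, 3.0, 7.0]
    print(generalized_relu(data))                      # standard ReLU
    print(generalized_relu(data, max_value=6.0))       # capped output
    print(generalized_relu(data, negative_slope=0.1))  # leaky variant
    print(generalized_relu(data, threshold=1.0))       # non-zero threshold
```

Keeping all three options in one function mirrors the description above; in practice, frameworks often expose the leaky and capped variants as separate activations.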
